Mulong Xie

Researcher & Builder & Founder · PhD in Artificial Intelligence

Human-AI Interaction & Dynamic Software

Research

What I work on

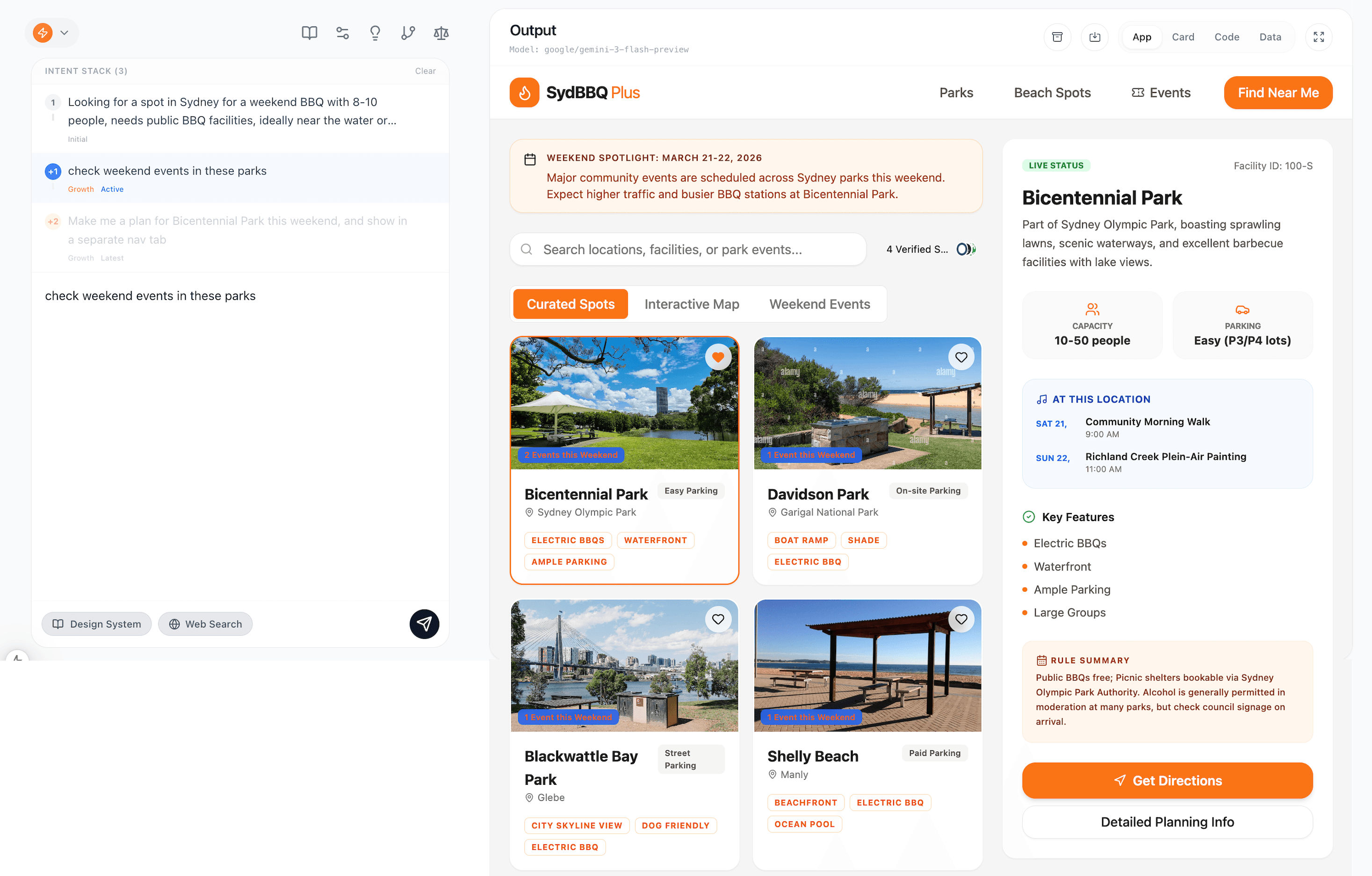

Human-AI Interaction & Software as Content

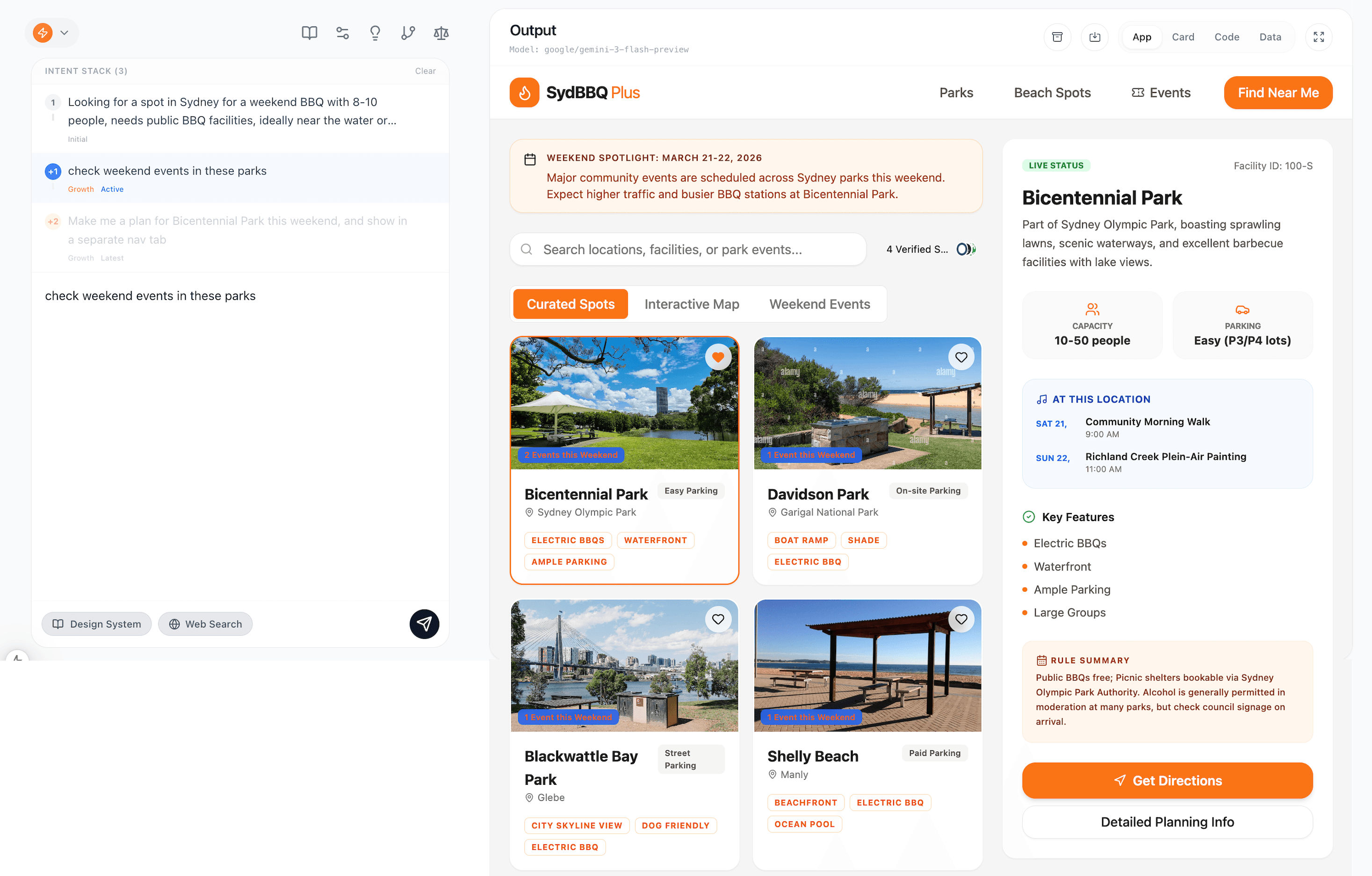

Rethinking the interaction layer between humans and agentic systems. The Software as Content (SaC) paradigm proposes that AI should generate live, evolving UIs as agentic applications rather than static text responses.

Dynamic Software

A new class of software in which the frontend is generated on demand and evolves through interaction, rather than being shipped as a fixed, pre-built artifact. The backend is an agent system, not a static codebase.

GUI Agent

Autonomous agents that navigate GUIs to complete tasks across apps and platforms — combining visual grounding, cross-app action execution, and non-intrusive automation without code instrumentation.

Visual Intelligence for Productivity

Intelligently parse UI semantics from raw visual inputs and transform static artifacts into live outputs — element detection, layout understanding, design-to-code, and form digitization.

Selected Work

Featured projects

Software as Content (SaC)

A new human-agent interaction paradigm through generative, on-demand, evolving applications.

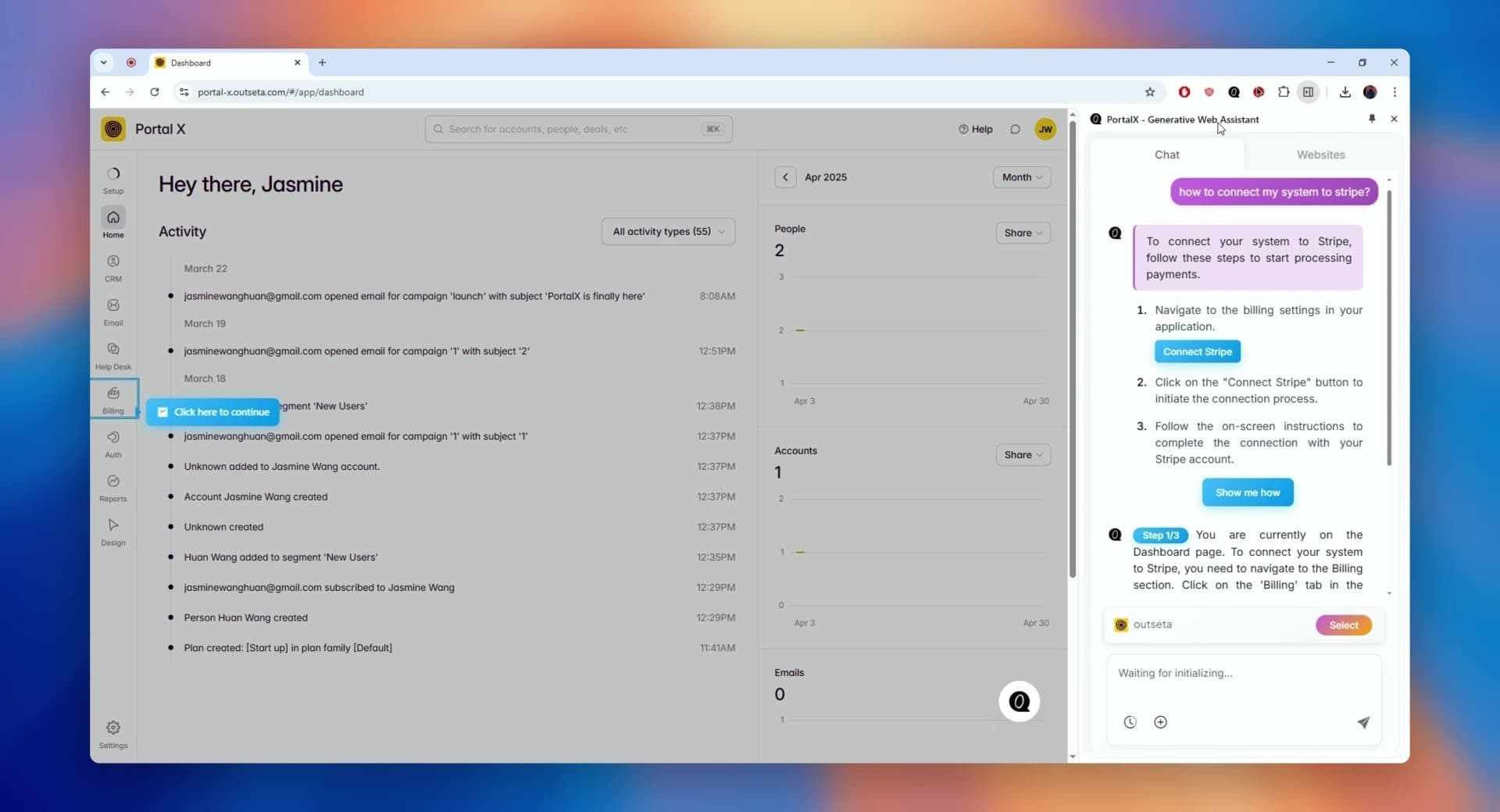

PortalX — Software Modelling

An autonomous agent that explores any given software and provides non-intrusive user assistance and automation. YC China 2025.

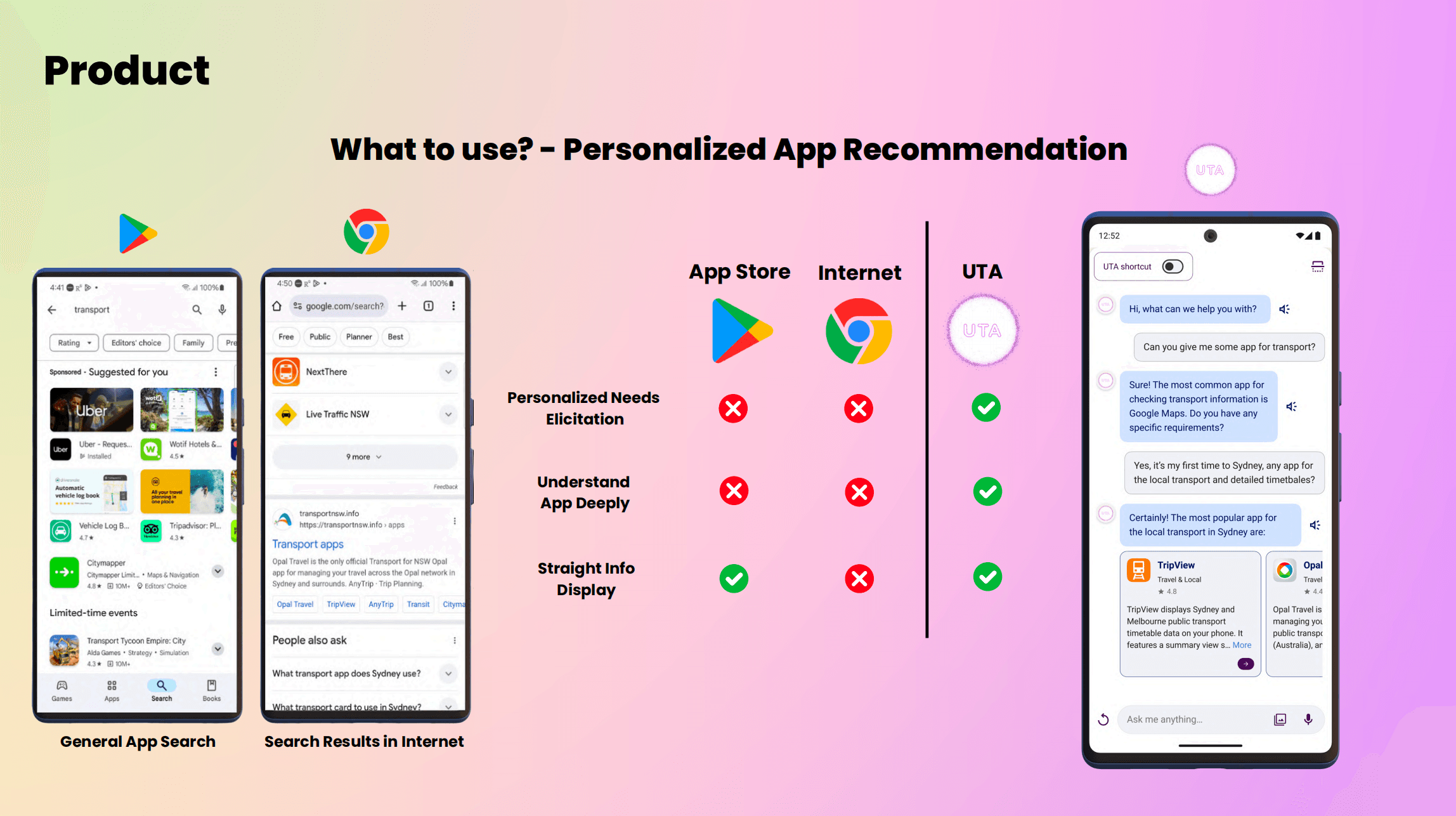

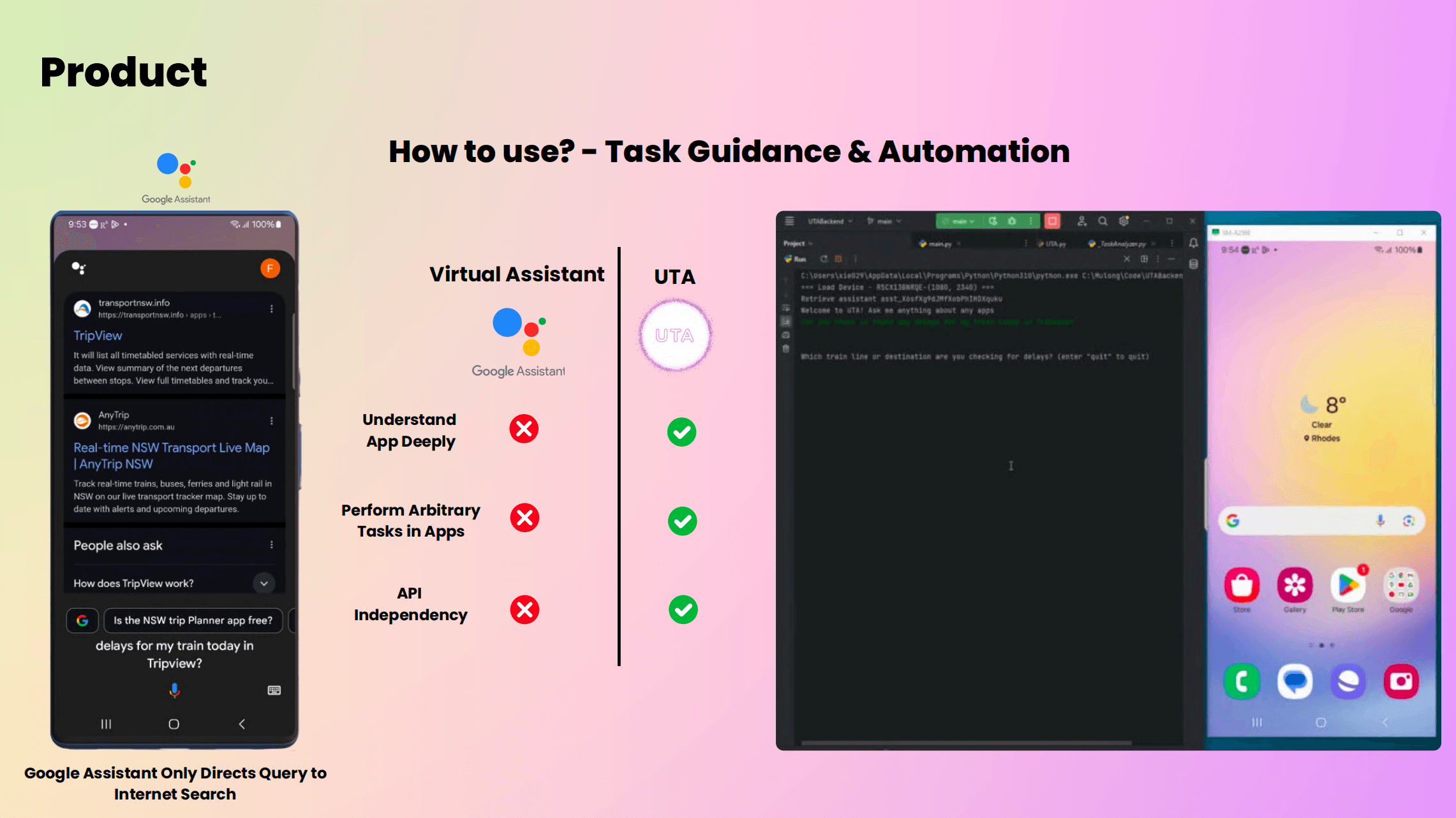

UTA — Universal Task Assistant

Mobile AI agent layer to understand apps and assist the user with app-intent mapping, step-by-step function guidance, and task automation.

PhD Thesis — Visual Intelligence for GUI Automation & Beyond

Three years of research on software understanding and automation, predating the agent era.

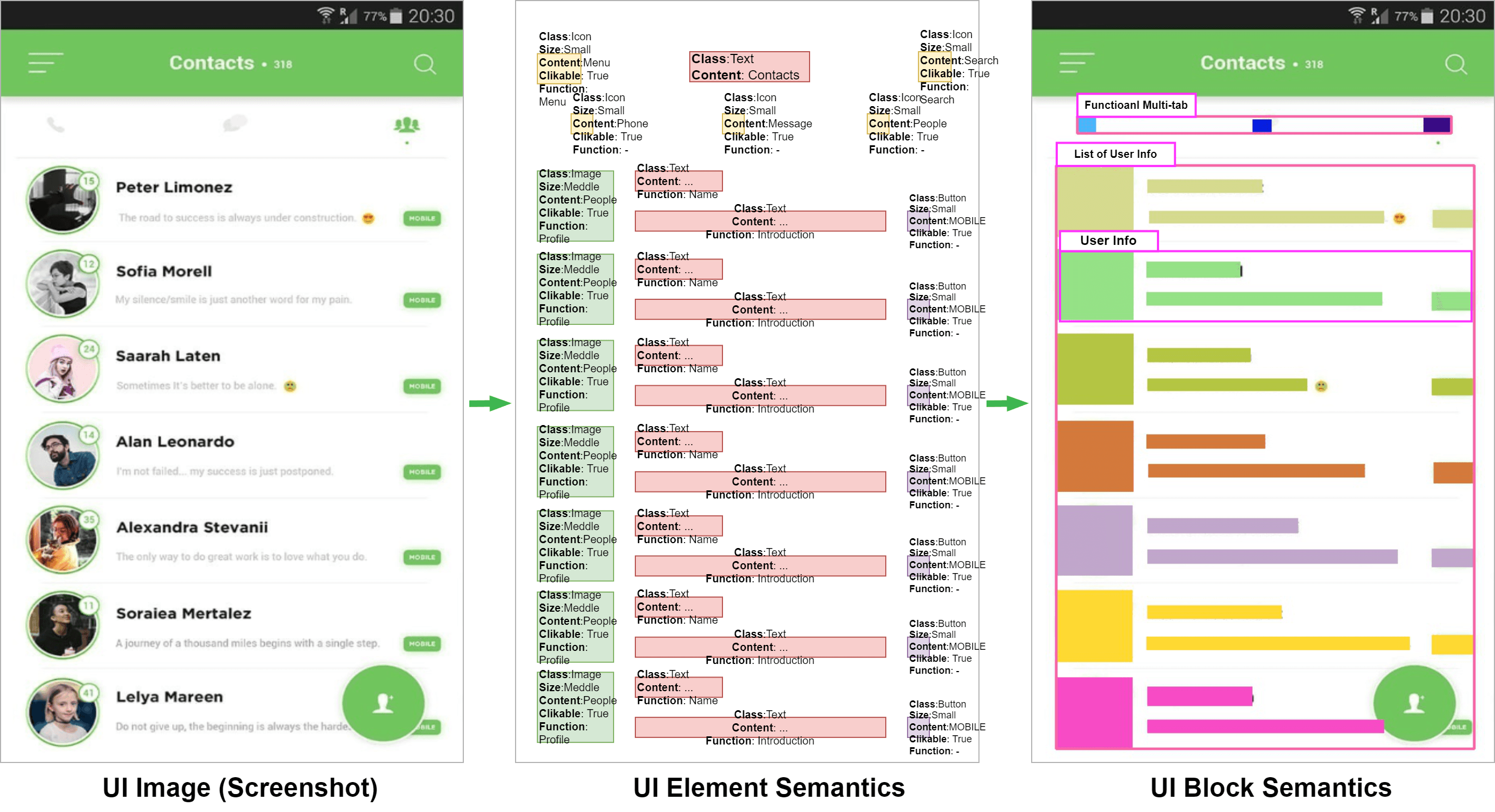

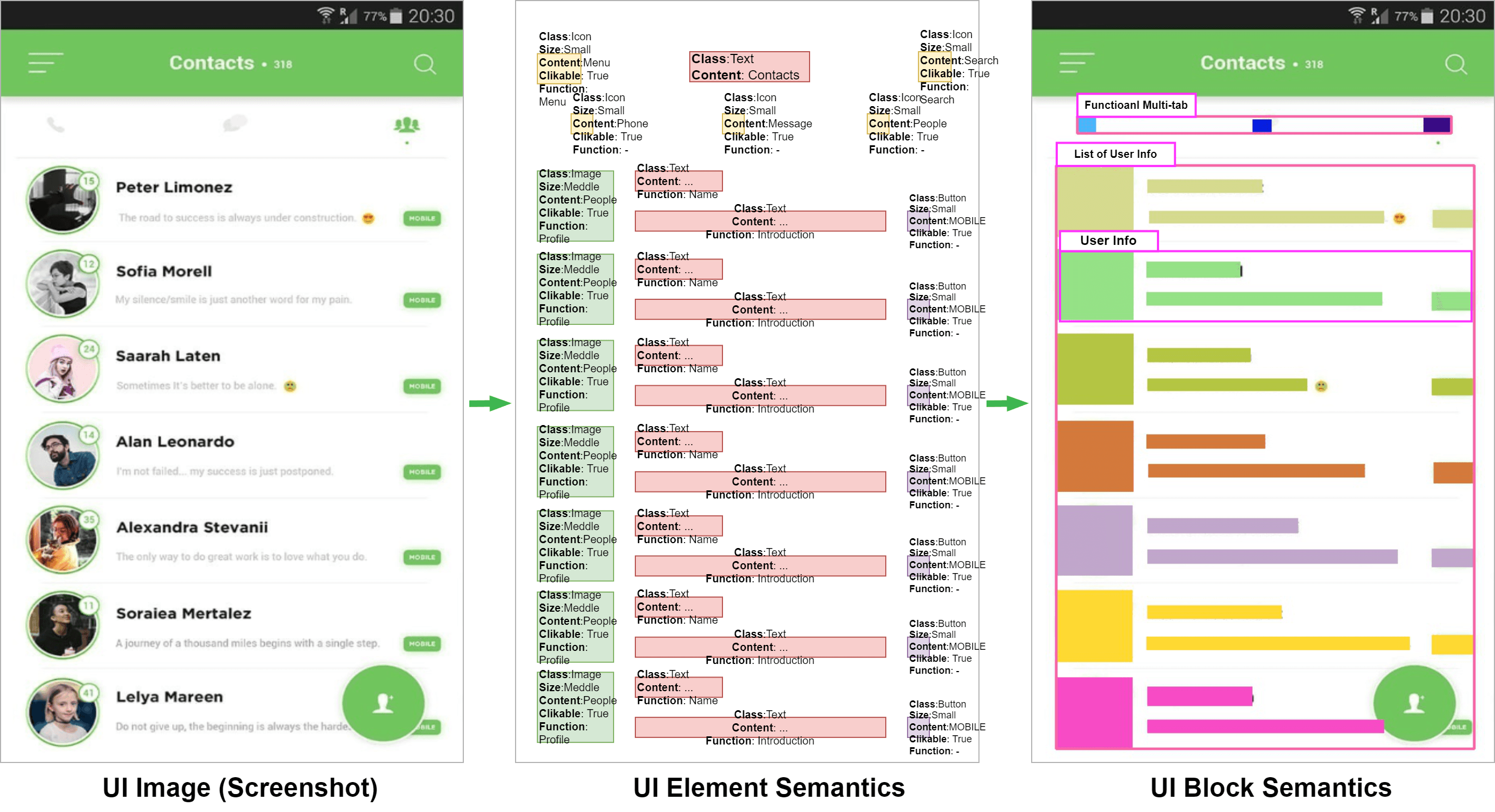

Visual Software Semantic Understanding

An unsupervised, vision-based approach to analyzing the spatial and semantic relations among GUI elements and blocks.

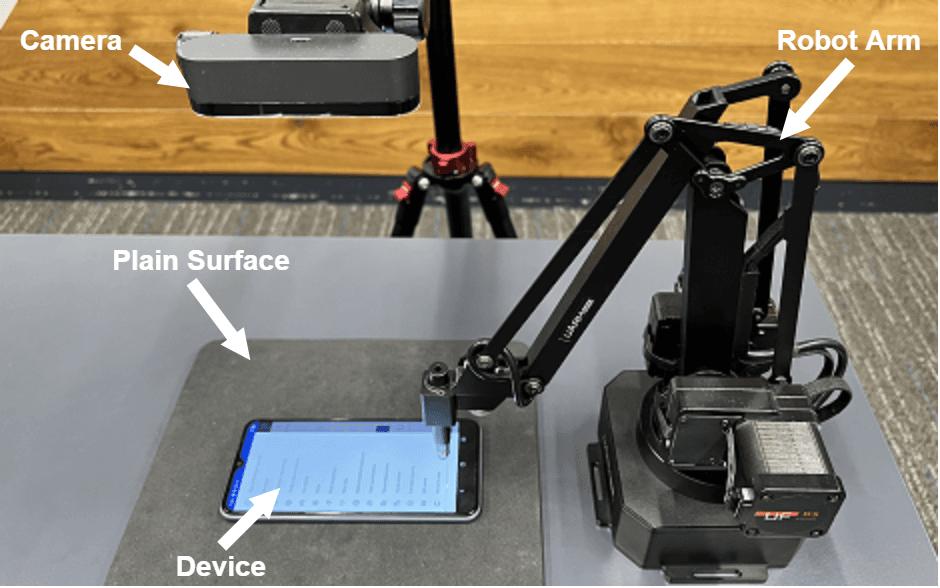

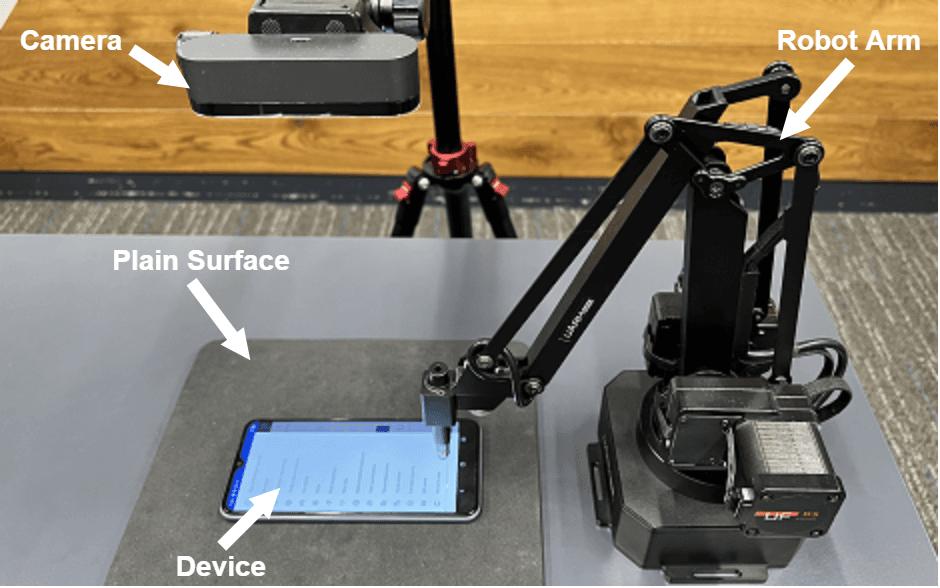

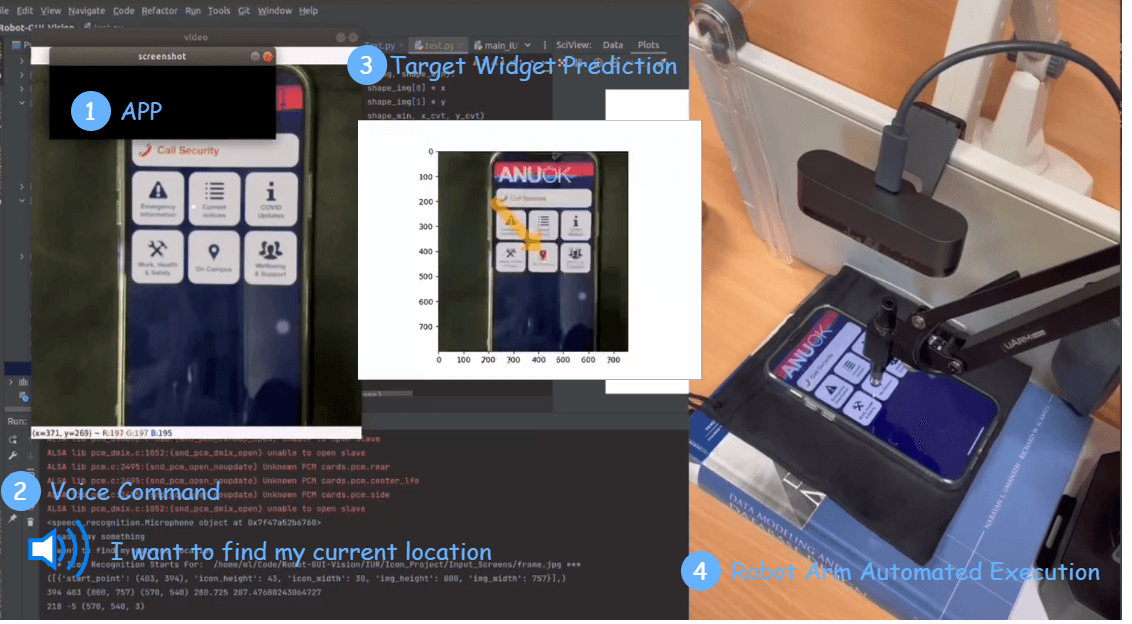

APP Voice Control x Robot Arm

Identifies the target UI component in any app from the user's spoken natural-language request, then uses a robot arm to interact with the device automatically: a physical GUI agent before the agent era.

Background

Experience & Education

Researcher & Builder

2026 – Present · Independent · Remote

- Developing the Software as Content (SaC) theoretical foundation & open-source protocol

- Working toward Dynamic Software

Co-founder

2025.09 – 2026.03 · Fellou · Hybrid

- Co-founding the world's first agentic browser, leading product design and research

- Raised over $30M in funding

Founder

2025.01 – 2025.09 · PortalX · Sydney, Australia

- Leading R&D and commercialization of intelligent automation agents for enterprise software

- Selected for Miracle Plus (formerly YC China) Fall 2025: 1% acceptance rate among 5,800+ applicants

Research Scientist

2024.01 – 2025.10 · CSIRO's Data61 · Sydney, Australia

- Leading multiple research and commercialization projects on responsible AI & software engineering automation

- CSIRO Technology Innovation Award 2024

- ON Prime accelerator prize-winning project

Postdoc

2022.12 – 2024.01 · CSIRO's Data61 · Sydney, Australia

- Leading research on intelligent software engineering and AI safety

- ACM Best Paper Award

Full-Stack Engineer Intern

2018.11 – 2019.01 · TF-AMD · Penang, Malaysia

- Developed an internal document search engine as a full-stack engineer

- Recommendation letter from the Product Director

PhD in Artificial Intelligence

2020 – 2022 · Australian National University · Canberra, Australia

- Thesis: Visual Intelligence for GUI Understanding and Automation

- National Research Agency's PhD Top-up Scholarship

- HDR Fee Remission Scholarship

- Postgraduate Research Scholarship

Bachelor of Software Engineering (Honours)

2018 – 2020 · Australian National University · Canberra, Australia

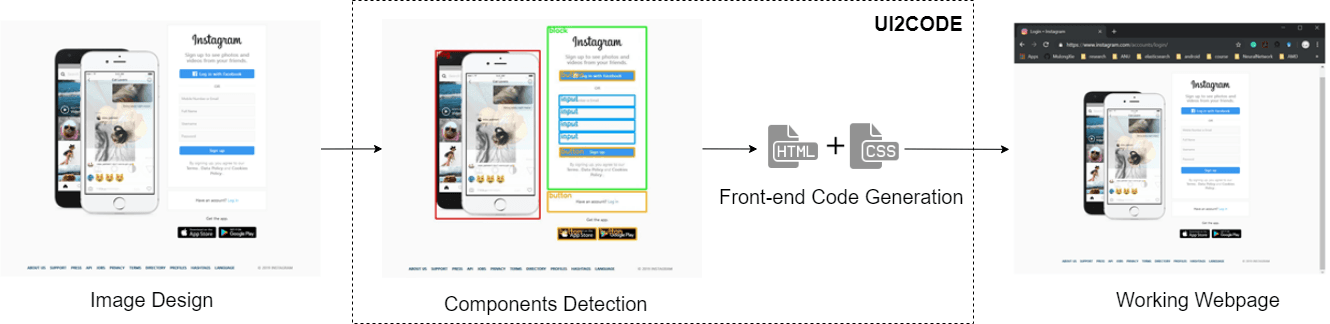

- Thesis: UI2CODE: Computer Vision Based Reverse Engineering of User Interface Design

- GovHack 2019 Australian Capital Territory, Runner Up (2019)

- Government Grant in the Innovation Australian Capital Territory Competition (2018)

Bachelor of Intelligent Science and Technology

2015 – 2018 · Nanjing University of Science and Technology · Jiangsu, China

- Member of a key laboratory of unmanned aerial vehicles (2017)

- Class President (2017-2018)

Public Projects

All Public Projects

2026

Independent Researcher

Software as Content (SaC)

A new human-agent interaction paradigm through generative, on-demand, evolving applications.

2025

Founder

Fellou

Co-founded Fellou, the world's first agentic browser. Raised over $30M in funding.

PortalX — Software Modelling

An autonomous agent that explores any given software and provides non-intrusive user assistance and automation. YC China 2025.

2024

Research Scientist

UTA — Universal Task Assistant

Mobile AI agent layer to understand apps and assist the user with app-intent mapping, step-by-step function guidance, and task automation.

2023

Research Scientist

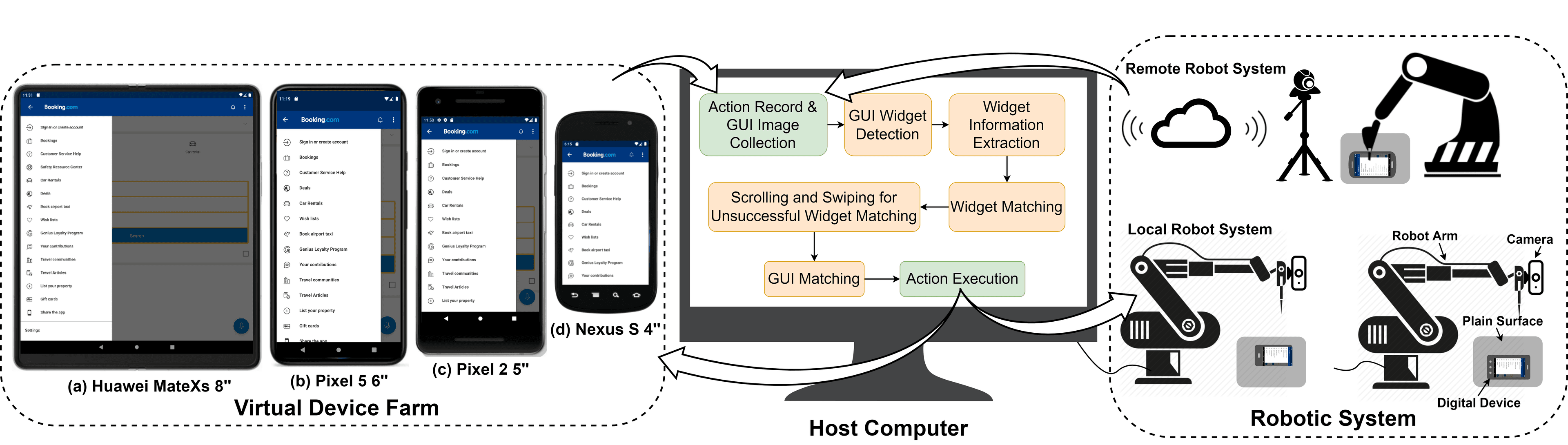

NiCro

Record once, replay anywhere. NiCro captures user actions on one device and re-executes them across iOS and Android at any screen size — purely vision-based, zero source code access required.

2022

PhD in Software Engineering & Human-AI Interaction

PhD Thesis — Visual Intelligence for GUI Automation & Beyond

Three years of research on software understanding and automation, predating the agent era.

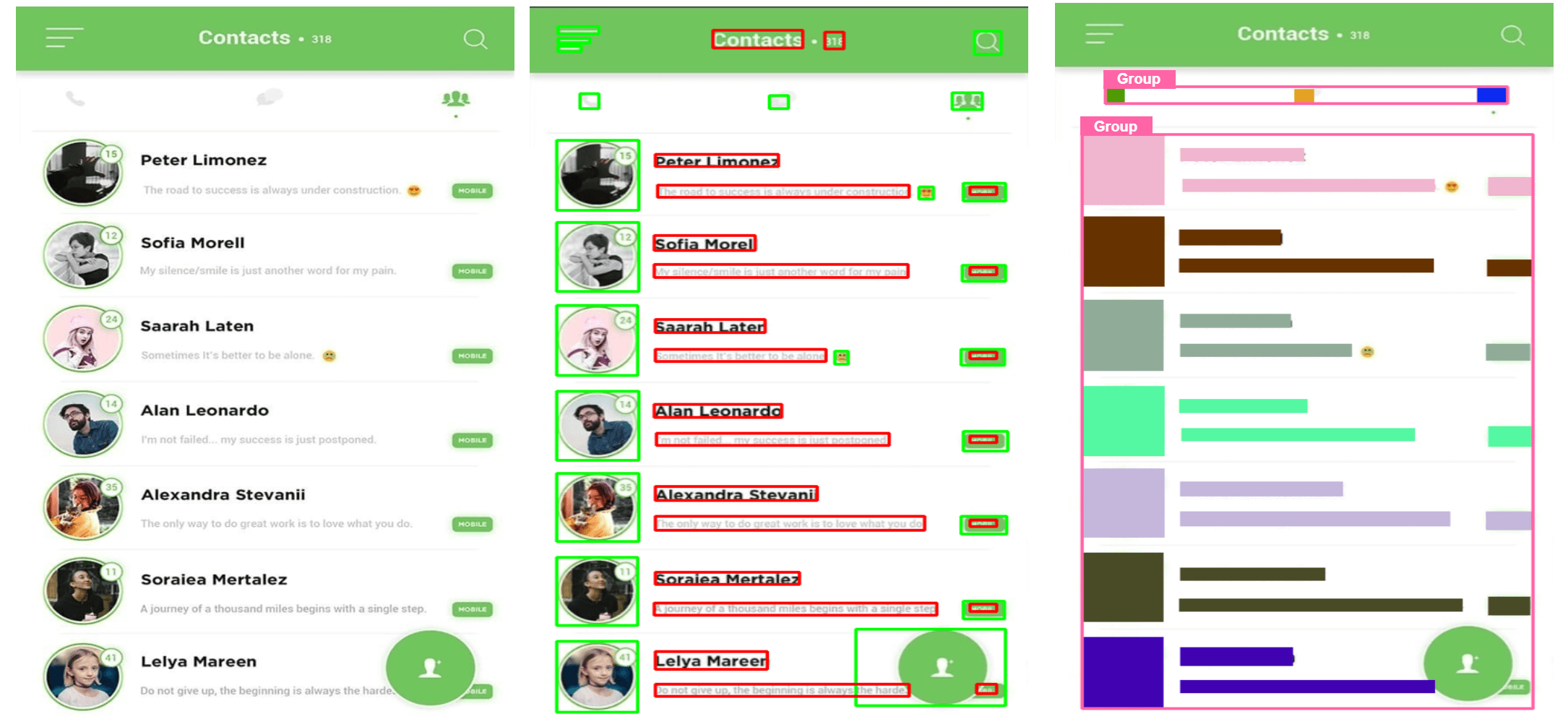

Visual Software Semantic Understanding

An unsupervised, vision-based approach to analyzing the spatial and semantic relations among GUI elements and blocks.

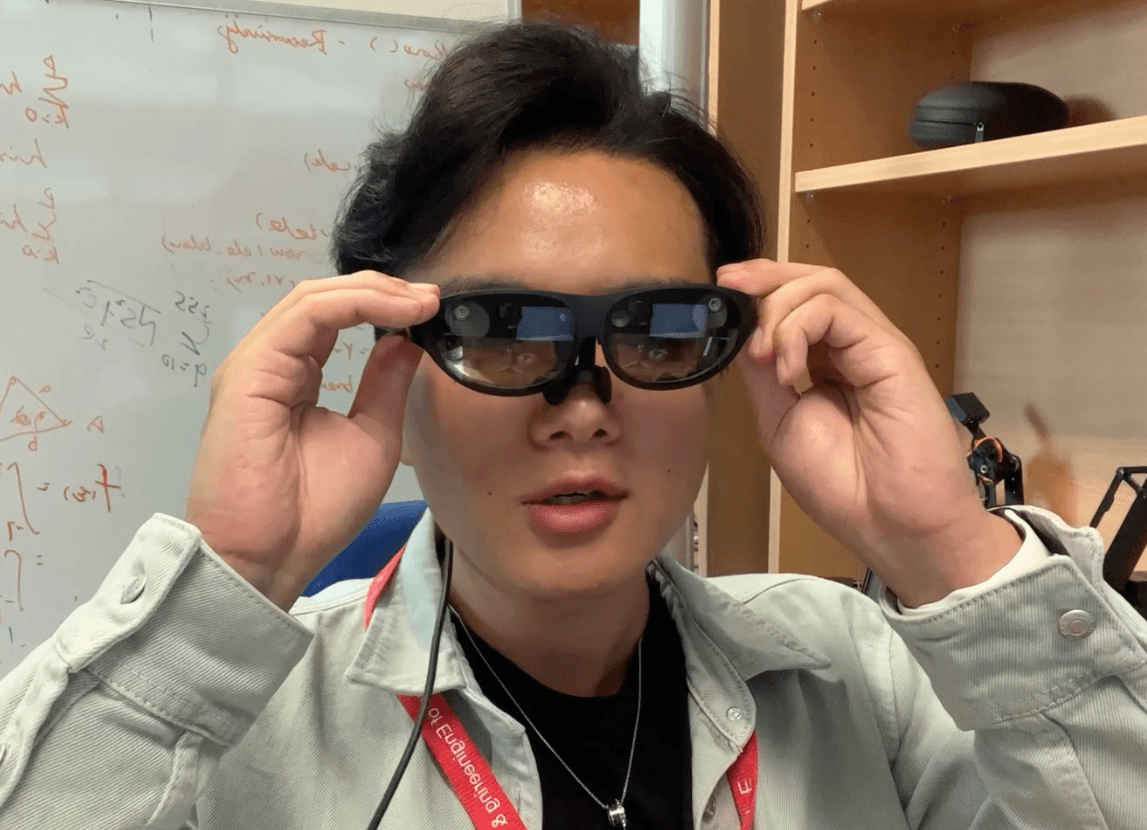

AR x Object Detection

What if AR glasses could understand the real world, not just overlay virtual objects onto it? This project integrates natural object detection with AR wearables — making the environment itself machine-readable.

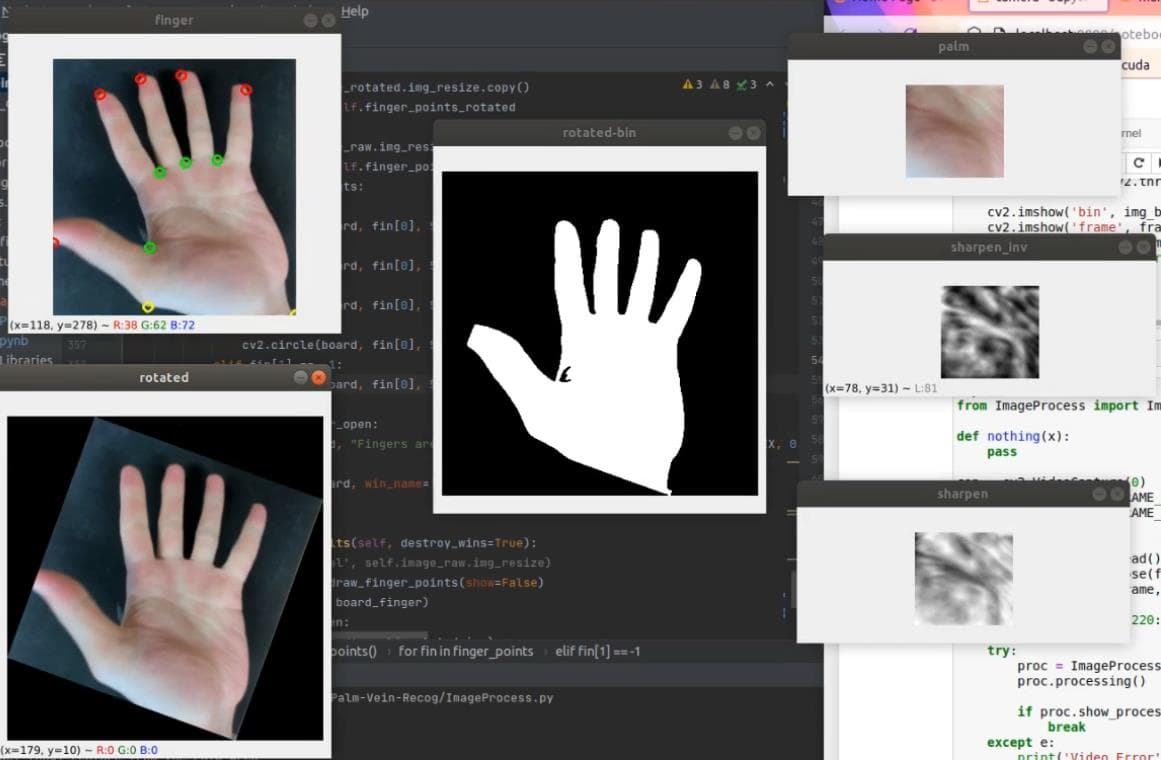

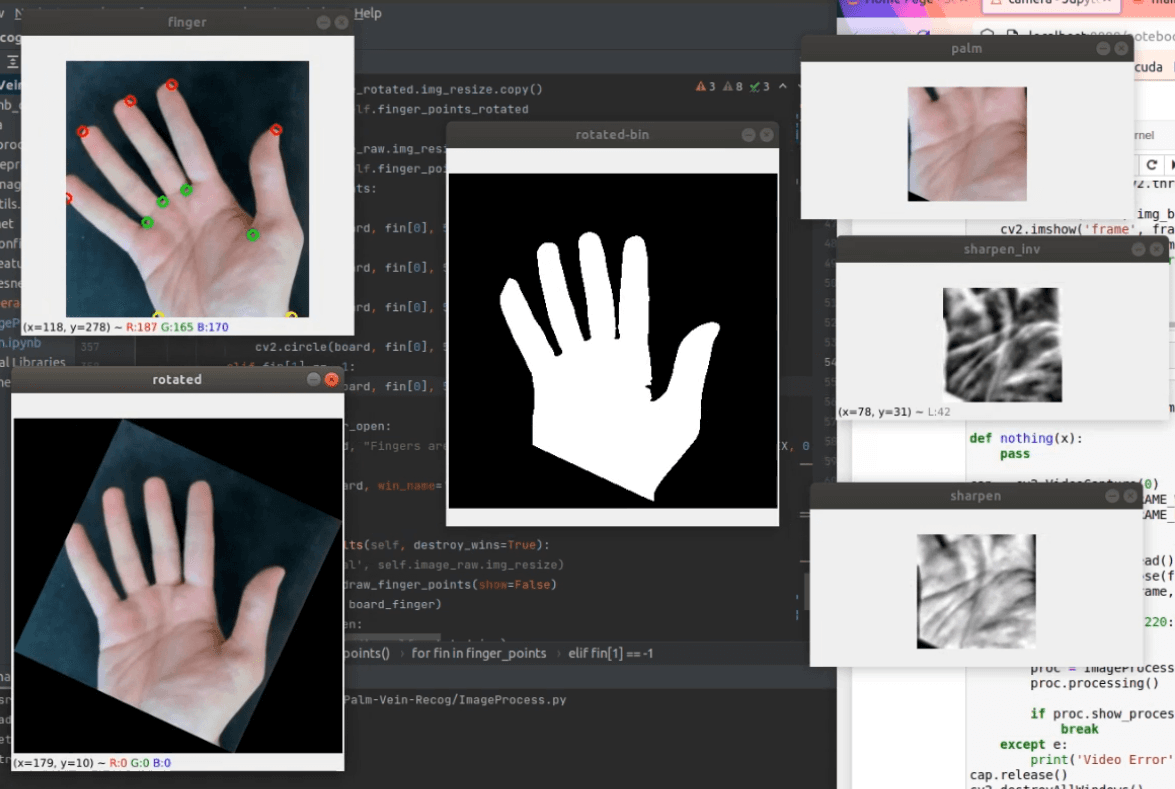

Palm Recognition

Training-free, real-time palm region detection and feature extraction from hand images, using classical image processing to reach ~18 fps without annotated data or deep learning overhead.

2021

PhD in Software Engineering & Human-AI Interaction

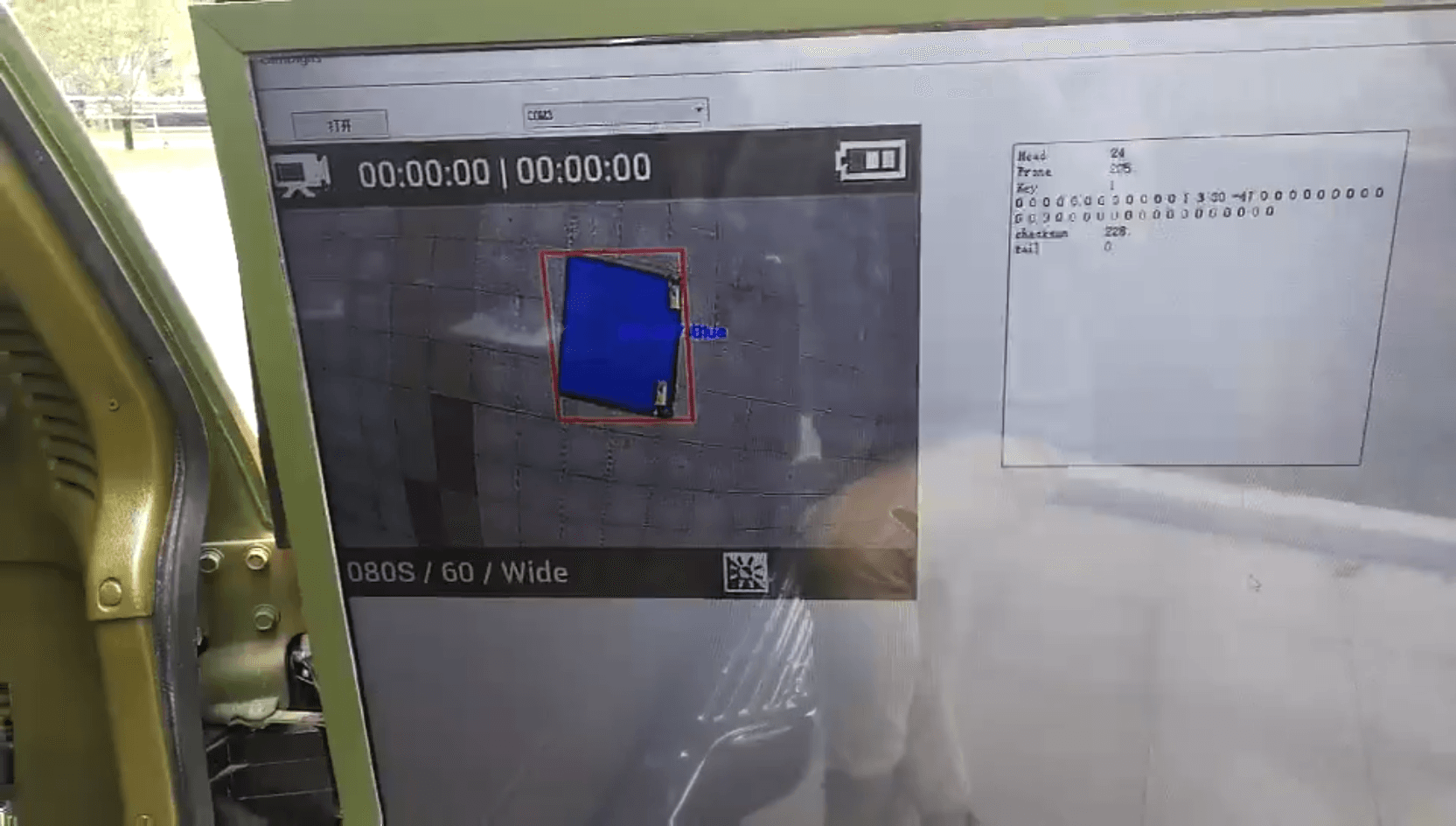

APP Voice Control x Robot Arm

Identifies the target UI component in any app from the user's spoken natural-language request, then uses a robot arm to interact with the device automatically: a physical GUI agent before the agent era.

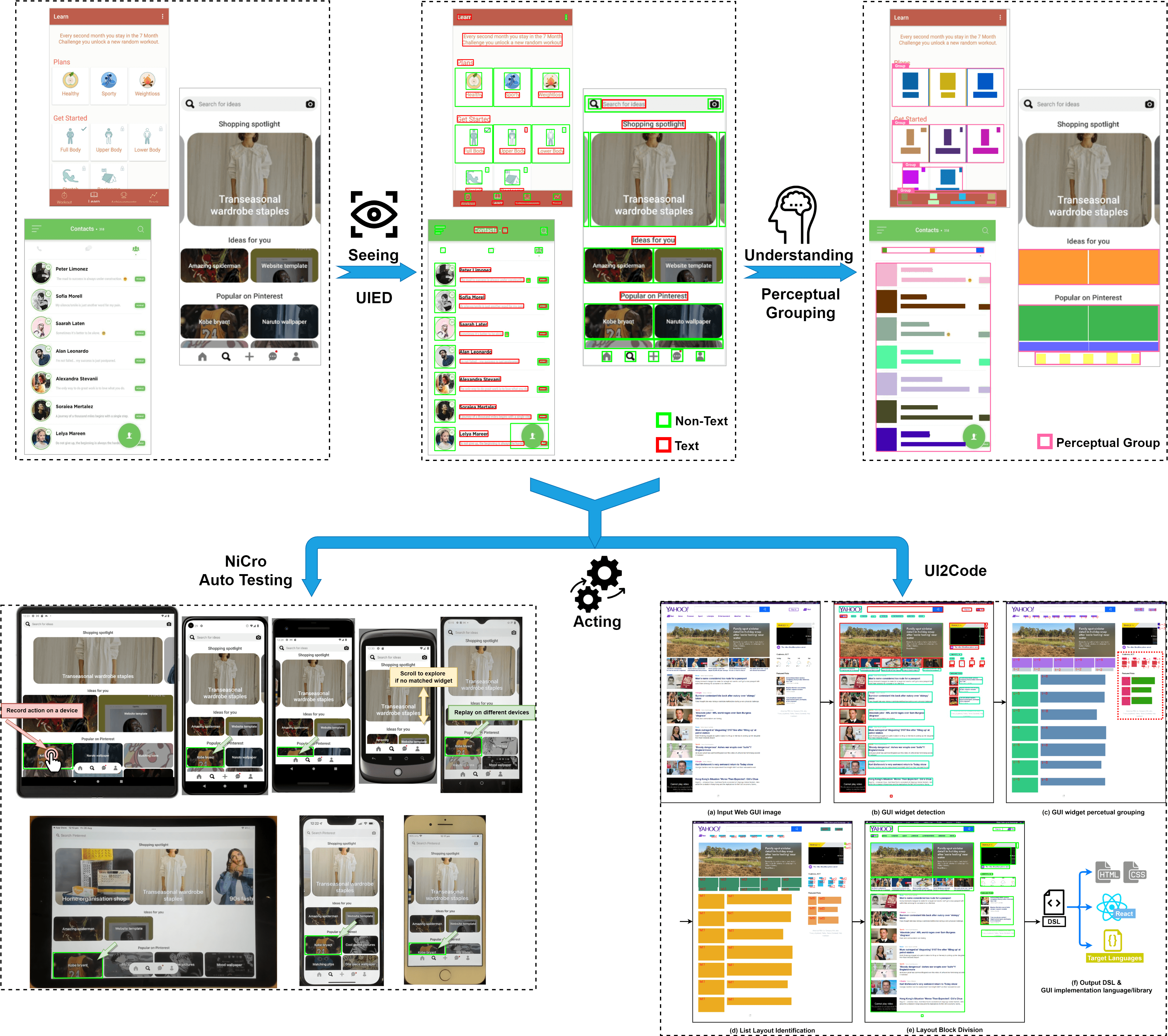

UI Component Perceptual Grouping

Humans don't see isolated buttons and labels — they see cards, lists, menus, and tabs. This project applies Gestalt psychology to automatically segment any GUI into perceptual layout blocks, the way a human would.

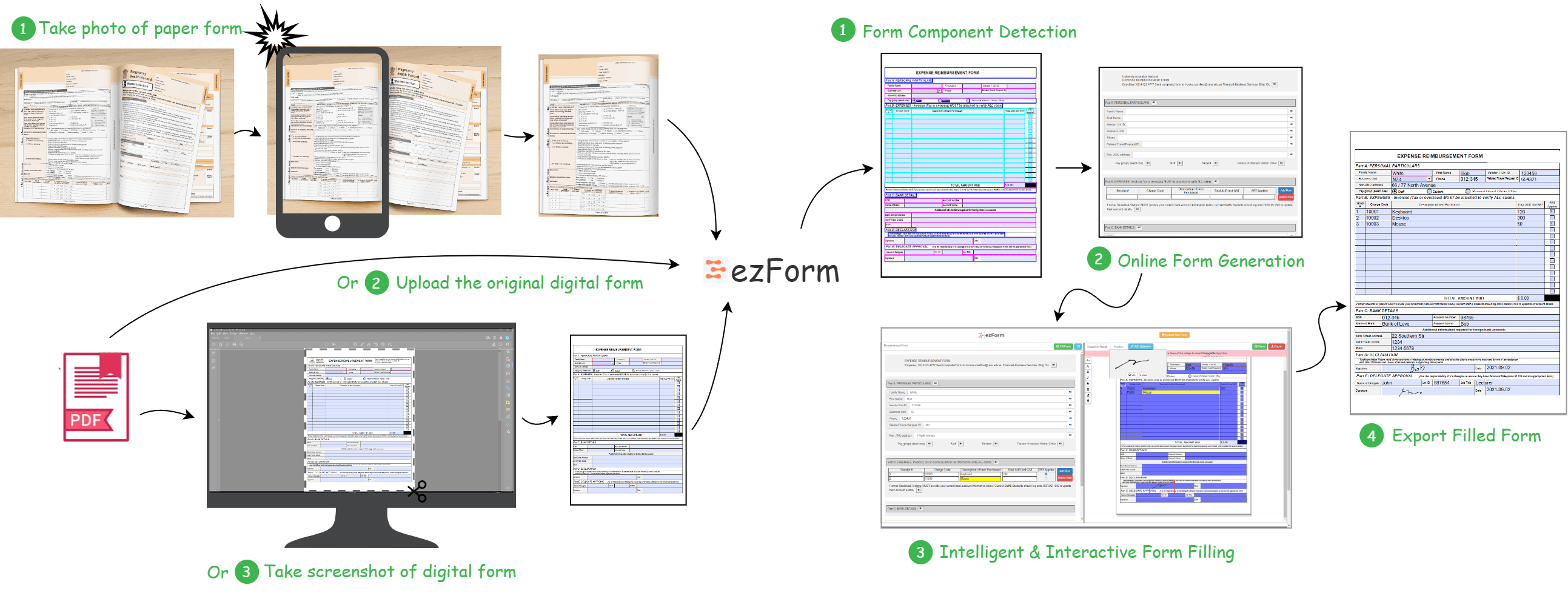

ezForm

Snap a photo of any paper form — ezForm converts it into a fully interactive web form automatically, using computer vision to recognise every field, checkbox, and layout structure.

2020

PhD in Software Engineering & Human-AI Interaction

EasyD2C

Upload a UI screenshot, get back modular HTML, CSS, and React code — EasyD2C uses computer vision to reverse-engineer design images into structured, maintainable front-end code.

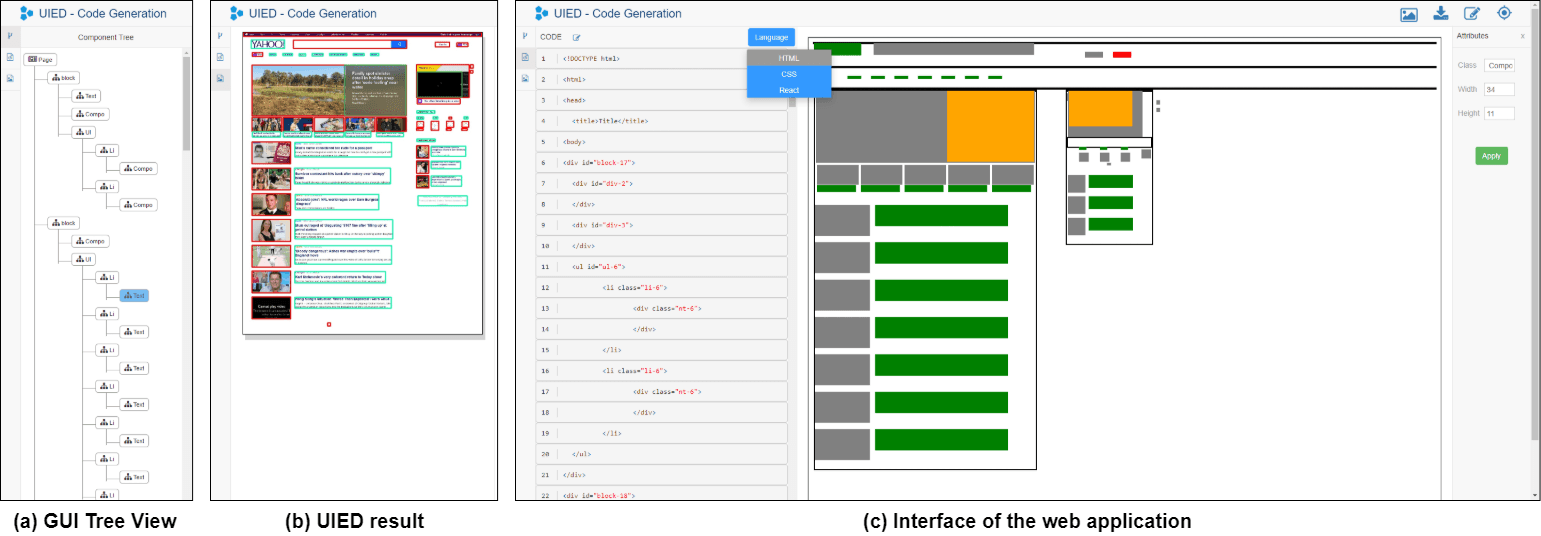

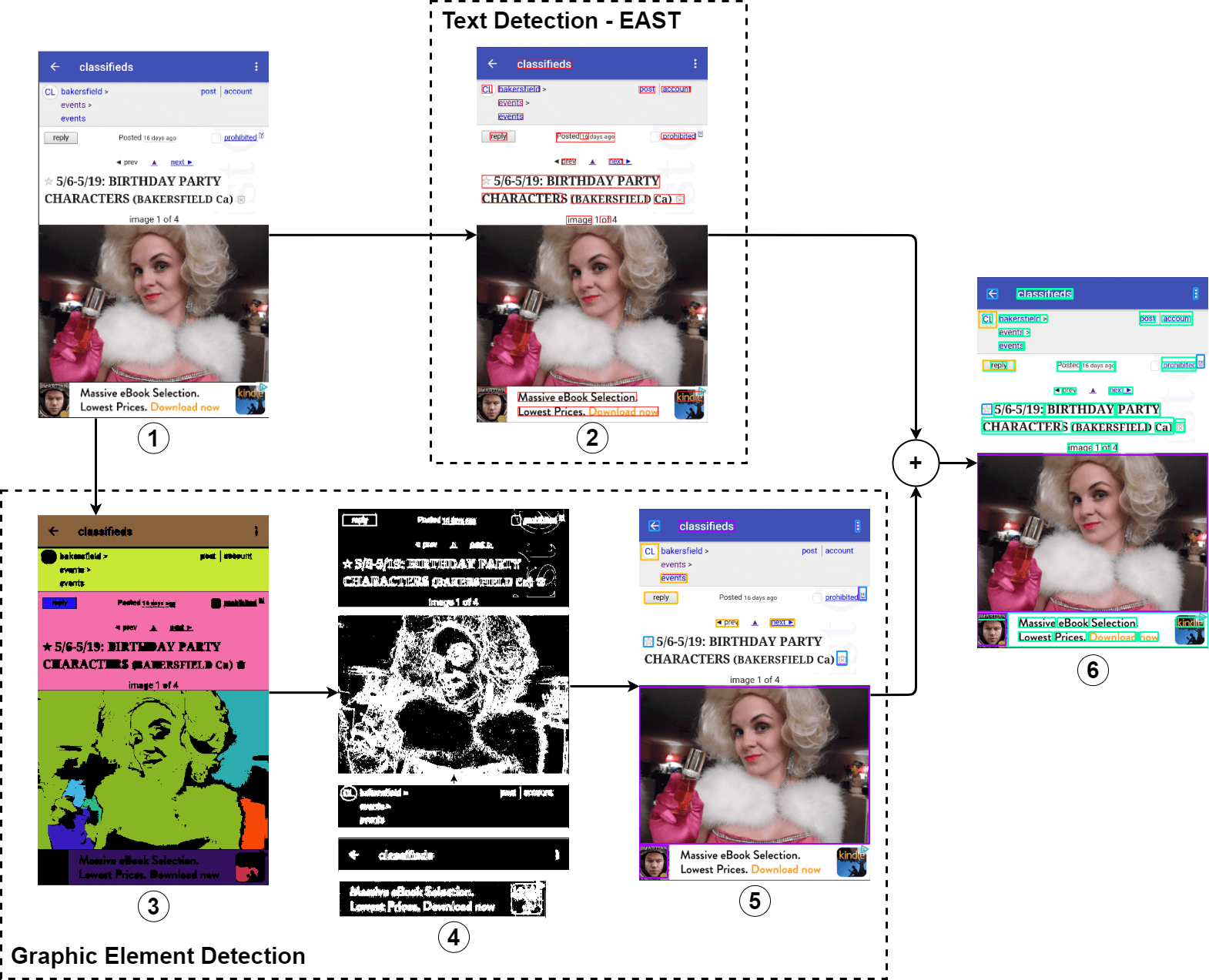

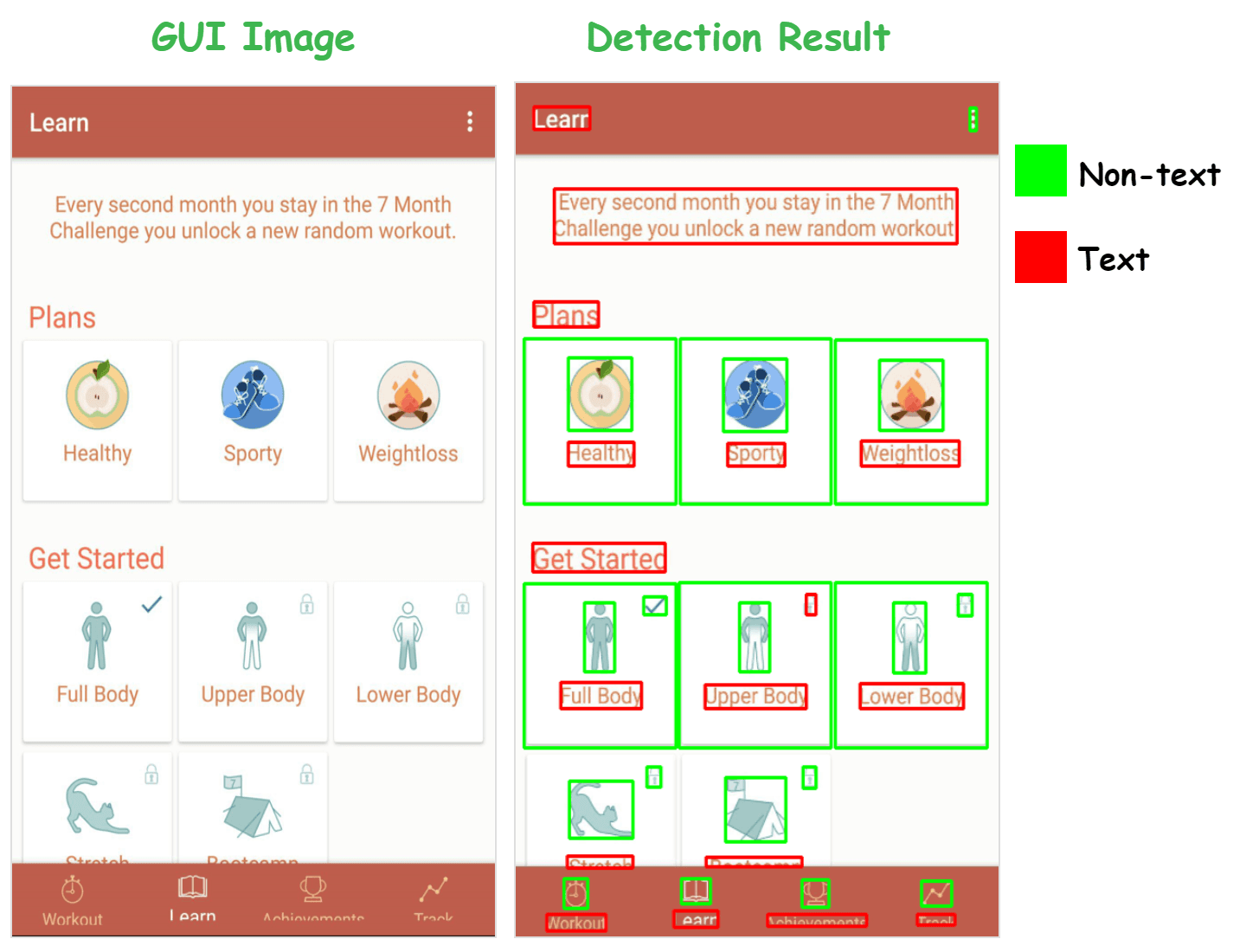

UI Element Detection (UIED)

Unsupervised detection of UI elements from any GUI screenshot — no training data, no labels. UIED combines Google OCR for text and a CV+CNN pipeline for non-text elements, handling both mobile and desktop UIs.

2019

Bachelor

GUI Image Code Generation

Computer vision-based reverse engineering of UI design — automatically converts a GUI screenshot or mockup into working UI code and a structured element tree, bridging the designer-to-developer gap.

2018

Bachelor

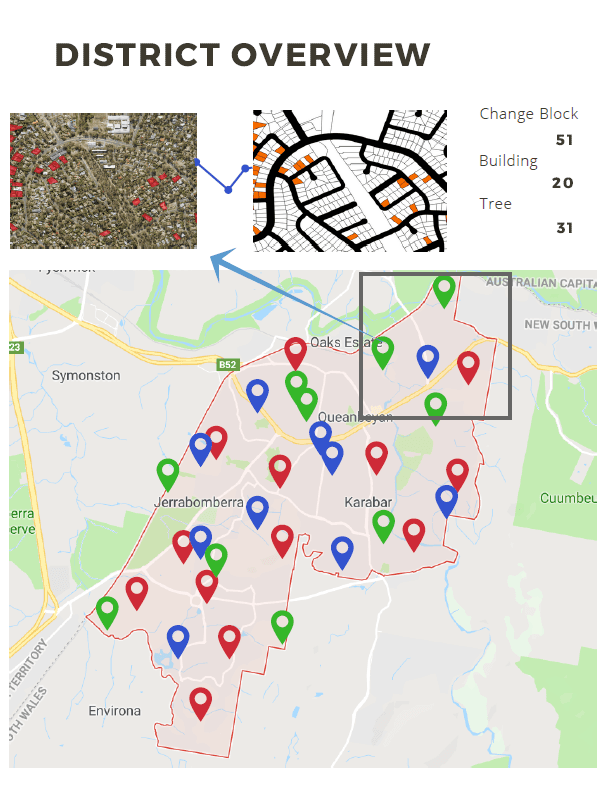

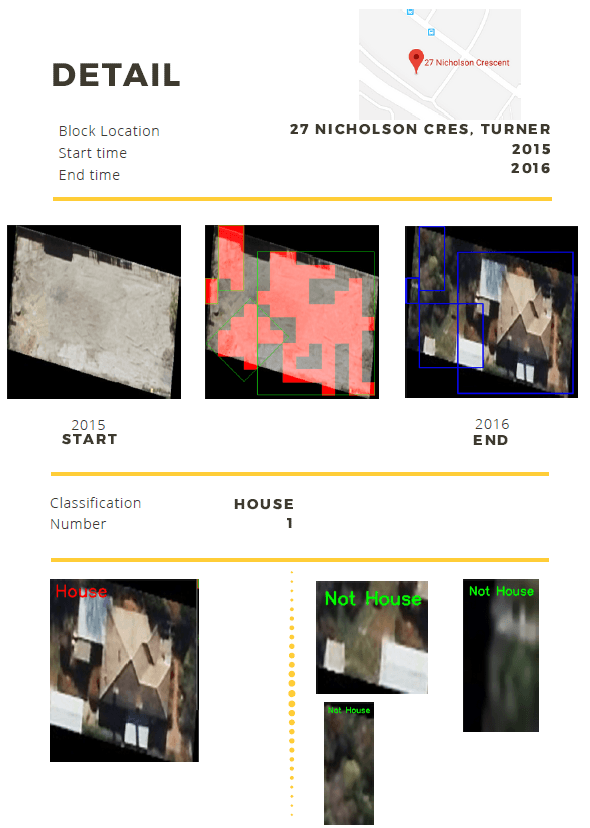

Geographical Change Detection & Report

Detect and report land-use changes — vegetation growth, new construction, land clearing — by contrasting satellite images of the same region across time periods using computer vision.

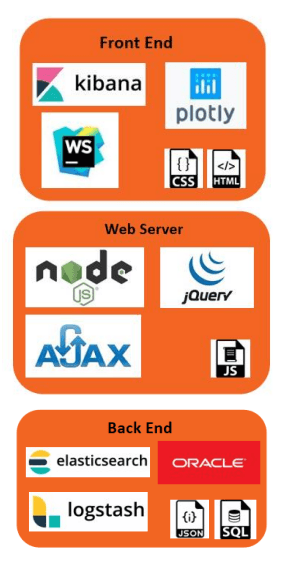

Universal Keywords Search Engine

Search across massive unstructured document databases (txt, PDF, Word) using keyword queries. Built on Elasticsearch with a user-friendly web interface.

2017

Bachelor

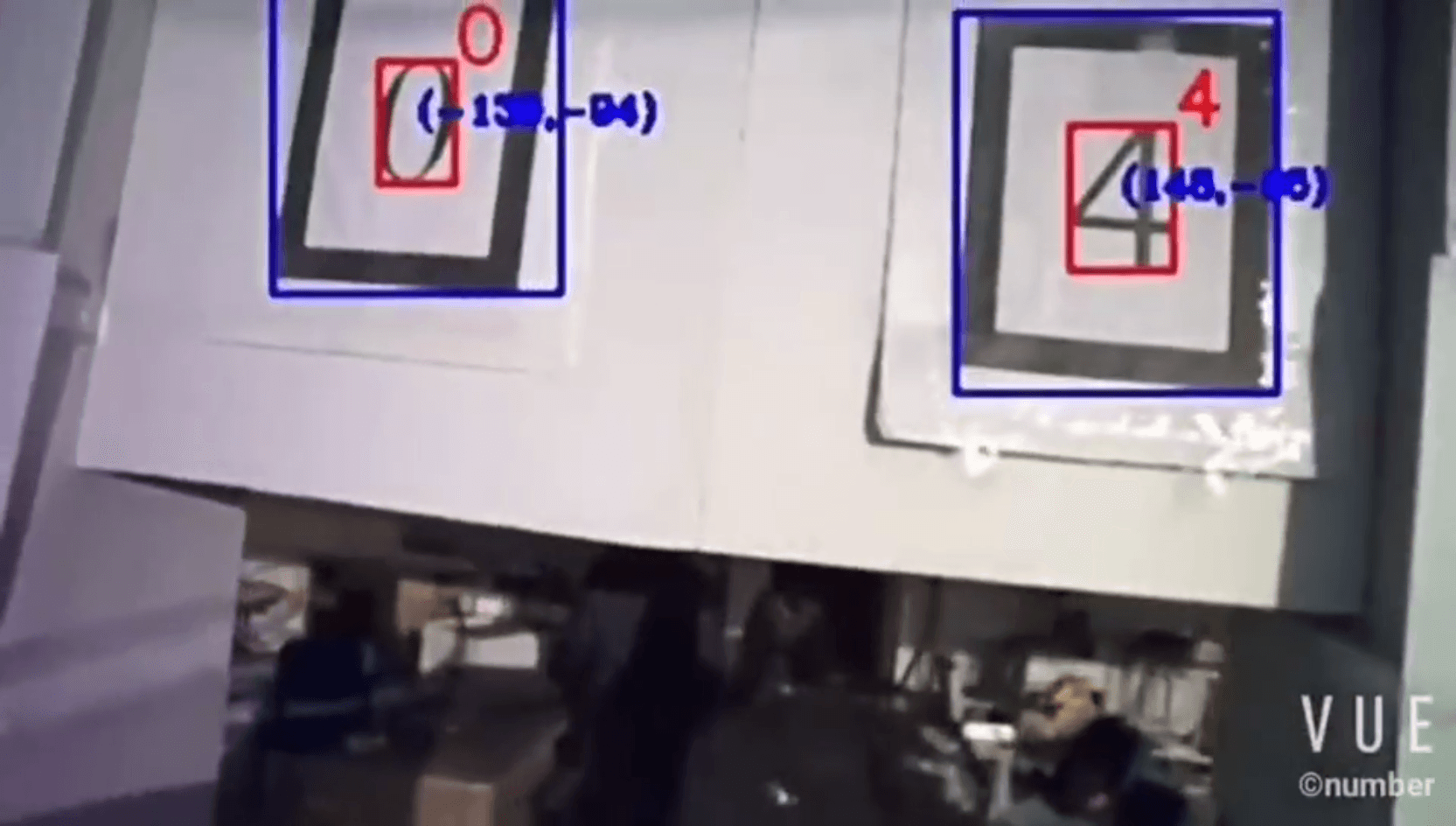

Digital Target Detection on UAV

Detect physical targets in natural environments and read the digits displayed on them, instructing an unmanned aerial vehicle to execute the corresponding actions autonomously.

Color Target Detection on UAV

Detect coloured target regions in dynamic natural environments to instruct the UAV to complete actions — robust to lighting variation, motion blur, and complex backgrounds.